On the day before Christmas, when markets were relatively quiet, a major deal shook the artificial intelligence computing race. Nvidia reportedly spent $20 billion to license technology from chip startup Groq and recruit key members of its team, including the company’s CEO. That CEO previously helped Google develop what has become one of the leading alternatives to Nvidia’s AI processors.

Despite the significance of the move, the deal has largely flown under the radar in recent months. It may have been overshadowed by the holiday season or by the wave of other investments and partnerships announced by the world’s most valuable company over the past year.

However, attention is expected to return next week when Nvidia hosts its annual GTC conference in San Jose, California. Originally known as the GPU Technology Conference, the four-day event is one of the most important gatherings in the AI industry.

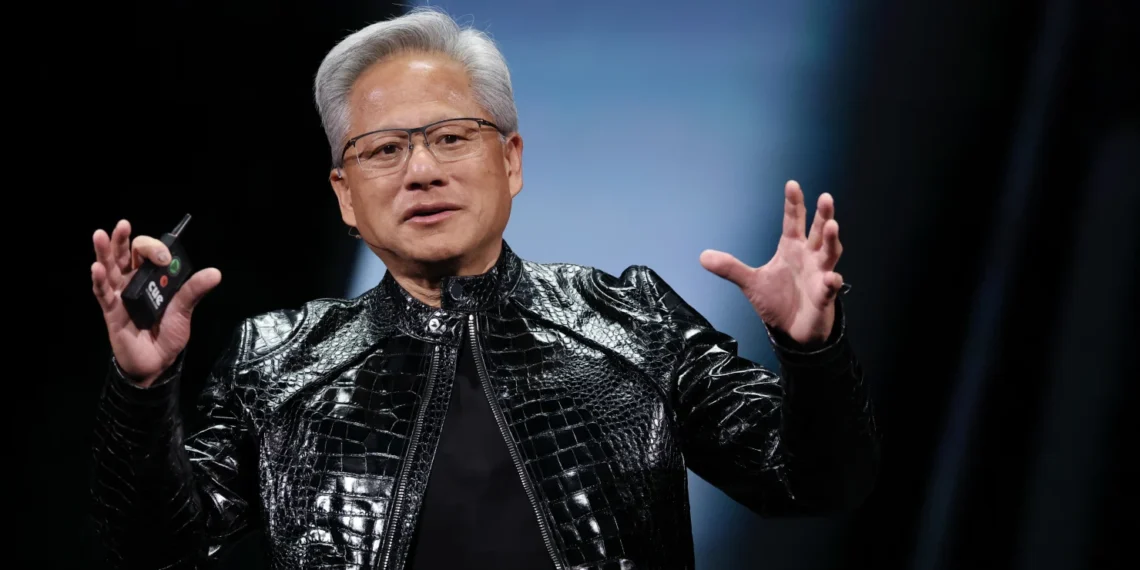

The conference will take place at the San Jose McEnery Convention Center, while Monday’s keynote from Nvidia CEO Jensen Huang will be held at the nearby SAP Center, home of the NHL’s San Jose Sharks. The arena setting reflects Huang’s rock-star reputation in the tech world, a persona he completes with his trademark leather jacket.

During the event, Nvidia is expected to reveal more about how it plans to integrate Groq’s chip technology into its already dominant AI computing ecosystem.

“I’ve got some great ideas that I’d like to share with you at GTC,” Huang said on the chipmaker’s late February earnings call.

Those ideas will likely be among the highlights of a conference often referred to as the “Super Bowl of AI.” Nvidia is also expected to provide updates on its GPU roadmap, including details about the next-generation Vera Rubin family of processors.

Why the Groq deal matters

Wall Street analysts believe Nvidia plans to use Groq’s technology to develop a new type of chip focused on AI inference — the process of running trained AI models in everyday applications.

Inference is becoming an increasingly important part of the AI ecosystem because it generates revenue for data-center operators and cloud providers that deploy AI models for users.

Nvidia’s GPUs are widely recognized as the performance leader for AI training, the stage where models process vast amounts of data to learn patterns before being deployed in real-world applications. This dominance in training has been a major driver behind Nvidia’s explosive growth in recent years.

The inference market, however, is far more competitive. As AI adoption spreads across industries, companies are searching for cost-effective chips capable of handling the massive demand for running AI models.

Several competitors are trying to gain ground.

Advanced Micro Devices (AMD), Nvidia’s biggest GPU rival, has begun gaining traction in inference and recently announced a high-profile partnership with Meta. At the same time, major technology companies are developing their own custom chips designed specifically for inference workloads.

Google’s Tensor Processing Units (TPUs) are among the strongest challengers to Nvidia. Their reputation has grown with the success of Google’s Gemini chatbot, which runs on TPUs. Google designs these chips in collaboration with Broadcom.

Amazon is also pushing its in-house Trainium chip, claiming it performs well in both training and inference. The AI startup Anthropic, known for its Claude model, uses Trainium chips but also relies on TPUs and has struck a deal with Nvidia as well.

Another emerging player is Cerebras, an AI chip startup preparing for an initial public offering. Oracle co-CEO Clay Magouyrk recently mentioned Cerebras during an earnings call, highlighting its growing visibility in the market.

Nvidia still dominates inference

Although competition is intensifying, Nvidia remains a powerful force in inference computing.

In 2024, the company revealed that about 40% of its revenue came from inference workloads. At last year’s GTC, Huang told analysts that “the vast majority of the world’s inference is on Nvidia today.”

More recently, during Nvidia’s February earnings call, CFO Colette Kress pointed out that industry publication SemiAnalysis had “declared Nvidia inference king,” noting that the company’s new Grace Blackwell GPUs deliver major performance gains compared with the earlier Hopper architecture.

Where Groq fits in

Nvidia’s decision to spend a reported $20 billion to license Groq’s technology and recruit its engineers suggests the company believes it can further strengthen its position in inference.

Interestingly, Nvidia did not acquire the entire Groq company. The licensing agreement is non-exclusive, likely to avoid potential antitrust scrutiny. Groq continues to operate its own cloud service built around its specialized inference chips.

Still, the deal brought several important people to Nvidia — most notably Groq founder and former CEO Jonathan Ross.

Before launching Groq in 2016, Ross worked at Google and helped develop the original TPU. He has now joined Nvidia as chief software architect.

Groq built chips known as LPUs (Language Processing Units), specifically designed for inference tasks. Ross has repeatedly said that Groq never intended to compete with Nvidia in AI training. Instead, the company focused entirely on making inference faster and more efficient.

In interviews over the years, Ross explained that Groq aimed to design chips optimized for real-time AI tasks where speed and efficiency matter most.

One reason Nvidia’s GPUs excel at training is their ability to perform enormous numbers of calculations simultaneously — a capability known as parallel processing. AI training requires analyzing vast datasets and identifying patterns, which involves performing many calculations at the same time.

Traditional CPUs execute tasks sequentially, while GPUs process them in parallel, making GPUs far better suited for AI training workloads.

Another advantage of Nvidia’s GPUs is their flexibility, largely due to the company’s CUDA software platform. CUDA — short for Compute Unified Device Architecture — allows GPUs to handle a wide variety of workloads, including inference.

When an AI model is running in inference mode and receives a prompt, it uses patterns learned during training to generate a response one token at a time. The model determines each piece of output based on probabilities derived from its training data.
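To make the token-by-token process concrete, here is a minimal sketch in Python. The probability table is a hypothetical stand-in invented for illustration; a real model computes these probabilities from billions of learned parameters rather than looking them up.

```python
import random

# Hypothetical toy "model": for each token, the probability of each next token.
# These numbers are made up for illustration only.
NEXT_TOKEN_PROBS = {
    "<start>": {"the": 0.6, "a": 0.4},
    "the": {"chip": 0.7, "model": 0.3},
    "a": {"chip": 0.5, "model": 0.5},
    "chip": {"runs": 0.8, "<end>": 0.2},
    "model": {"runs": 0.6, "<end>": 0.4},
    "runs": {"<end>": 1.0},
}


def generate(max_tokens=10, seed=0):
    """Produce output one token at a time, sampling each from a probability table."""
    rng = random.Random(seed)  # seeded so repeated runs give the same output
    tokens = []
    current = "<start>"
    for _ in range(max_tokens):
        probs = NEXT_TOKEN_PROBS[current]
        # The next token depends on the token chosen in the previous step.
        current = rng.choices(list(probs), weights=list(probs.values()))[0]
        if current == "<end>":
            break
        tokens.append(current)
    return tokens


print(" ".join(generate()))
```

Each pass through the loop needs the result of the previous pass, which is the property that shapes inference hardware design.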

However, inference workloads have different requirements than training. That difference is where Groq’s design philosophy comes in.

Groq’s LPUs rely on SRAM, a form of short-term memory located directly on the chip’s processing engine. This design significantly increases speed for certain tasks.

Nvidia’s GPUs, by contrast, use high-bandwidth memory (HBM) located next to the processor rather than directly on it. The global surge in AI demand has created shortages of HBM, driving memory prices sharply higher.

Ross described the distinction in a podcast interview with wealth advisory firm Lumida in 2023:

“GPUs are really great at training models. When somebody wants to train a model, I’m just like, ‘Just use GPUs. Don’t talk to us,’” Ross said.

“But the big difference is, when you’re running one of these models — not training them, running them after they’ve already been made — you can’t produce the 100th word until you’ve produced the 99th,” he added. “So, there’s a sequential component to them that you just simply can’t get out of a GPU. … It’s how quickly you complete the computation, not just how many computations you can complete in parallel. And we do the computations much faster.”
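Ross's point can be illustrated with a deliberately simplified timing model. The function names and the 10-millisecond step time below are assumptions chosen for illustration, not measurements of any real chip, and the model ignores the fact that each individual step is itself computed in parallel inside the processor.

```python
import math


def parallel_job_time_ms(num_tasks, ms_per_task, num_units):
    """Independent tasks can be spread across units, so more units means less time."""
    return math.ceil(num_tasks / num_units) * ms_per_task


def sequential_generation_time_ms(num_tokens, ms_per_token):
    """Token N needs token N-1 first, so the steps cannot be spread across units."""
    return num_tokens * ms_per_token


# 100 independent tasks at 10 ms each: extra units cut the total time.
print(parallel_job_time_ms(100, 10, num_units=1))    # 1000
print(parallel_job_time_ms(100, 10, num_units=100))  # 10

# 100 tokens generated in order: only a faster step shortens the total.
print(sequential_generation_time_ms(100, 10))  # 1000
print(sequential_generation_time_ms(100, 2))   # 200
```

In this sketch, adding units helps only the independent workload. For the token-by-token workload, the total falls only when each step gets faster, which is the advantage Ross claims for Groq's design.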

Complementary technologies

Ross has also said he believes Groq’s chips could work alongside Nvidia GPUs rather than replace them.

In an interview on The Capital Markets podcast in February 2025 — months before he joined Nvidia — Ross explained how the technologies could complement each other:

“We’re actually so crazy fast compared to GPUs that we’ve actually experimented a little bit with taking some portions of the model and running it on our LPUs and letting the rest run on GPU. And it actually speeds up and makes the GPU more economical. So, since people already have a bunch of GPUs they’ve deployed, one use case we’ve contemplated is selling some of our LPUs to, sort of, nitro boost those GPUs.”

That comment has attracted renewed attention now that Ross has joined Nvidia. Many analysts are eager to see how Huang plans to combine Groq’s technology with Nvidia’s existing hardware platform.

Following the Mellanox playbook

As AI technology evolves, specialization in chip design is becoming more common. Nvidia appears to be following a strategy similar to its acquisition of networking company Mellanox six years ago.

During the February earnings call, Huang suggested Groq could play a similar role.

“What we’ll do is we’ll extend our architecture with Groq as an accelerator in very much the ways that we extended Nvidia’s architecture with Mellanox,” Huang said.

That earlier acquisition proved extremely successful. Nvidia’s networking technology has become a critical component of modern AI infrastructure, helping transform the company into a full-stack AI platform rather than simply a chip manufacturer.

In Nvidia’s fiscal 2026 fourth quarter alone, its networking business generated about $11 billion in revenue, roughly matching AMD’s total company revenue.

Overall, Nvidia’s revenue surged 73% year over year to $68.13 billion in that quarter.

Just a few years ago, Nvidia’s networking business was on track to generate about $10 billion in revenue over an entire year. Today, it produces around $11 billion in just a single quarter, growing alongside Nvidia’s booming GPU business.
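For readers who want to check the scale of those figures, the back-of-envelope arithmetic can be sketched as follows. The inputs are the rounded numbers cited in this article; multiplying one quarter by four is an assumption that the quarter is representative of a full year, so the results are rough approximations rather than reported values.

```python
# Figures cited in this article, in billions of dollars (rounded).
networking_quarter_bn = 11.0          # networking revenue, fiscal 2026 Q4
total_quarter_bn = 68.13              # total revenue, fiscal 2026 Q4
yoy_growth = 0.73                     # total revenue growth, year over year
earlier_networking_annual_bn = 10.0   # annual networking pace "a few years ago"

# Assumption: one quarter times four approximates a full-year run rate.
annualized_networking_bn = networking_quarter_bn * 4
run_rate_multiple = annualized_networking_bn / earlier_networking_annual_bn

# Networking as a share of the quarter's total revenue.
networking_share = networking_quarter_bn / total_quarter_bn

# Year-ago quarterly revenue implied by 73% growth.
implied_year_ago_quarter_bn = total_quarter_bn / (1 + yoy_growth)

print(f"Annualized networking run rate: about ${annualized_networking_bn:.0f}B")
print(f"Multiple of the earlier annual pace: about {run_rate_multiple:.1f}x")
print(f"Networking share of quarterly revenue: about {networking_share:.0%}")
print(f"Implied year-ago quarterly revenue: about ${implied_year_ago_quarter_bn:.1f}B")
```

On these rounded inputs, networking alone is running at roughly four times its earlier annual pace and accounts for about a sixth of quarterly revenue.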

Investors are now hoping the Groq partnership could deliver similar long-term benefits.

The first clues about that possibility may arrive next week when Nvidia’s GTC conference begins.

Disclaimer: The views expressed on Realmwire are for informational purposes only and should not be considered investment advice. No specific outcome or profit is guaranteed.